Let's be honest: brand safety has always been influencer marketing's uncomfortable conversation. The one we'd rather skip in favor of talking about reach, engagement, and ROI. And even the data shows it.

According to our 2026 Influencer Marketing Benchmark Report, only 4.97% of brands named brand safety/compliance as their key influencer marketing challenge for 2026. But the data also shows that as many as 63% of brands have encountered some form of influencer fraud in past campaigns.

In simple terms, brand safety isn't something we can skip anymore.

The landscape has shifted - structurally, not gradually - and the brands that treat brand safety as a checkbox are one bad post away from a very expensive lesson.

What Brand Safety Actually Means (And Doesn't Mean)

Brand safety in influencer marketing is about one thing: ensuring that the creators you partner with don't put your brand in a position you can't defend.

That means scanning for content linked to sensitive topics - threats, violence, sexual content, war, politics, drugs, alcohol, tobacco, and deeply divisive subject matter - whether it was posted yesterday or three years ago.

Old content doesn't stay buried. It resurfaces. Often at the worst possible moment: mid-campaign, at peak visibility, right before a product launch.

But here's what brand safety is not: it's not a reason to treat every influencer as a potential liability. Creators are human beings with opinions, histories, and growth trajectories. A controversial post from 2019 may reflect a very different person than the one you're considering working with today.

Brand safety should inform judgment; it should never replace it.

This distinction matters enormously. The goal is not to sanitize the creator ecosystem into a gallery of inoffensive beige. The goal is to give brands the context they need to make defensible, confident decisions. With humans still in the driver's seat.

Why the Stakes Just Got Higher

In January 2025, Meta announced it was significantly reducing its third-party fact-checking program in the US, replacing it with a Community Notes model and - in Zuckerberg's own words - acknowledging it would "catch less bad stuff."

That sentence should be on the wall of every brand marketing team.

Because what Meta chose to step back from, brands now have to step into. The platform safety net has shifted. What once got filtered at the source now circulates freely across Instagram, Facebook, and Threads - the exact platforms where influencer marketing lives.

For brands running campaigns at scale, this isn't just a policy update. It's a reputation risk multiplier. And it makes real, systematic brand safety infrastructure not a nice-to-have, but essential.

What AI Can Do (That Humans Simply Can't at Scale)

This is where it gets interesting.

Until recently, brand safety meant someone on your team spending hours manually scrolling through a creator's feed. Impractical for a shortlist of five. Impossible at scale. And often inconsistent - because what one person flags, another misses.

AI changes that equation fundamentally.

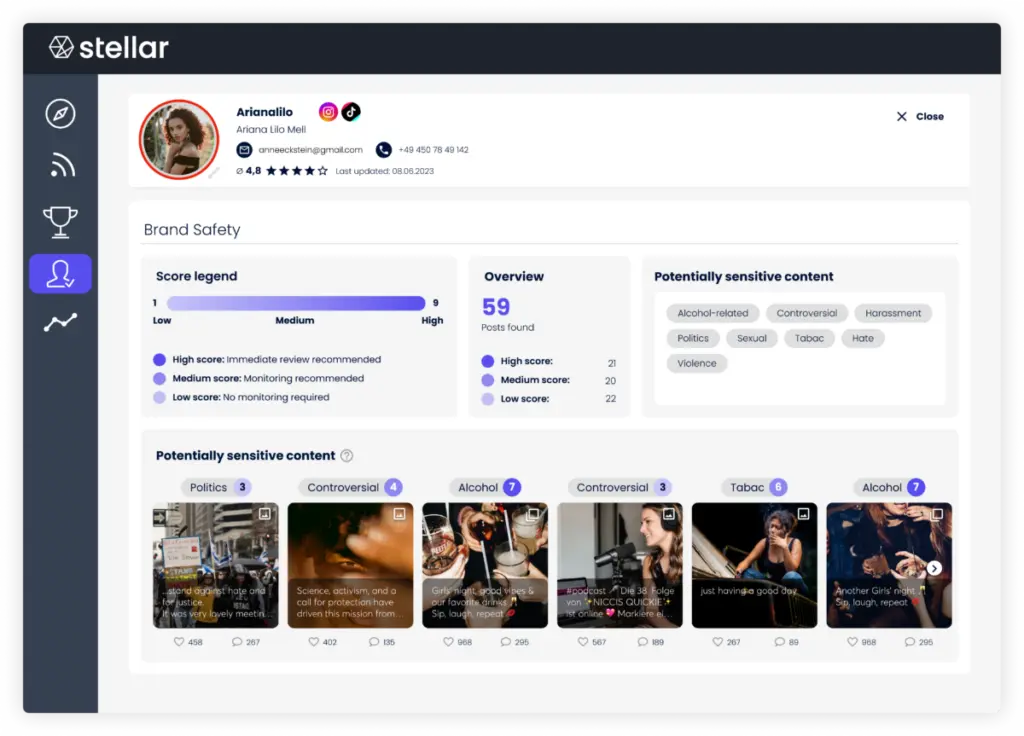

On the Stellar platform, our Brand Safety feature analyzes all available textual content on a creator's profile, across every supported social network, in any language, going back years.

It classifies content across eleven risk categories - including threats, violence, self-harm, sexual content, war, controversial topics, drugs, alcohol, tobacco, politics, and coded language (algospeak). Every detected piece of content is assigned a severity score from 0 to 9. Results are updated daily.

What would take a human team days gets surfaced in minutes. Not to make the decision for you - to give you the context to make it yourself.
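To make that concrete, here is a minimal sketch of how scored findings could be triaged for a human reviewer. It is not Stellar's implementation: the `Finding` structure, the category identifiers, the function names, the review threshold of 5, and the reading of 0 as least severe and 9 as most severe are all assumptions made for illustration.

```python
from dataclasses import dataclass

# The eleven categories named above; these identifiers are illustrative.
CATEGORIES = {
    "threats", "violence", "self_harm", "sexual_content", "war",
    "controversial_topics", "drugs", "alcohol", "tobacco", "politics",
    "algospeak",
}


@dataclass(frozen=True)
class Finding:
    """One flagged piece of content (hypothetical structure)."""
    post_id: str
    category: str
    severity: int  # assumed scale: 0 = least severe, 9 = most severe

    def __post_init__(self):
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")
        if not 0 <= self.severity <= 9:
            raise ValueError("severity must be between 0 and 9")


def findings_for_review(findings, min_severity=5):
    """Return findings at or above the threshold, most severe first."""
    flagged = [f for f in findings if f.severity >= min_severity]
    return sorted(flagged, key=lambda f: f.severity, reverse=True)


def worst_by_category(findings):
    """Map each category to the highest severity seen for it."""
    worst = {}
    for f in findings:
        worst[f.category] = max(worst.get(f.category, 0), f.severity)
    return worst
```

The point of the sketch is the division of labor: the code only sorts and summarizes, and a person still reads the flagged posts and makes the call.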

But textual analysis is just the beginning.

As Briac Le Guillou, Data Scientist at Stellar Tech, explains:

Multimodal AI - models that simultaneously process text, images, video, and audio - means we can now analyze what's actually in a creator's content, not just the caption they wrote about it.

A post with a perfectly neutral caption can contain visual elements that would make a brand deeply uncomfortable. We can see that now. We couldn't, reliably, before.

This represents a structural leap in what brand safety can actually detect - and how early it can detect it.

Brand Safety Is A Piece Of A Bigger Puzzle

Here's a truth the industry sometimes misses: a creator can pass every brand safety check and still be the wrong partner.

That's why at Stellar, we've built brand safety as one layer within a broader protection framework:

Partnership Disclosure Monitoring

Are creators properly labeling sponsored content? Regulatory frameworks like ARPP in France or IMA in Belgium require transparent disclosure. AI can now automatically flag whether disclosures are present, consistent, and compliant - reducing legal and reputational exposure.
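As a rough illustration of the simplest version of such a check, the sketch below looks for a disclosure hashtag in a sponsored post's caption. The marker list, the post fields (`id`, `caption`, `sponsored`), and the function names are assumptions made for this example; real disclosure rules vary by market and platform, and a production system would need far more than hashtag matching.

```python
import re

# Hypothetical marker list; actual requirements depend on market and platform.
DISCLOSURE_MARKERS = ("#ad", "#sponsored", "#partnership", "#collaboration")


def has_disclosure(caption, markers=DISCLOSURE_MARKERS):
    """True if the caption contains any marker as a whole hashtag."""
    # Extract complete hashtags so that "#adventure" does not match "#ad".
    tags = {tag.lower() for tag in re.findall(r"#\w+", caption)}
    return any(marker in tags for marker in markers)


def undisclosed_posts(posts):
    """Return ids of sponsored posts whose caption lacks a disclosure marker."""
    return [
        post["id"]
        for post in posts
        if post["sponsored"] and not has_disclosure(post["caption"])
    ]
```

Matching whole hashtags rather than substrings is the one design choice worth noting: it avoids counting a tag like `#adventure` as a disclosure.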

Audience Credibility Analysis and Fake Detection

Inflated follower counts and artificial engagement aren't just a performance problem. They're a brand safety problem. If the audience isn't real, the impact isn't real - and you've paid for it.

AI-powered audience analysis examines engagement patterns, follower growth curves, and comment authenticity to surface hollow reach before it becomes a post-campaign disappointment.

Tone of Voice Alignment

A creator can be technically brand-safe and completely brand-wrong. A brand that positions itself as reassuring and family-friendly and partners with a creator known for dark humor and provocation isn't facing a safety crisis - it's just a bad fit. Evaluating editorial alignment is part of responsible creator selection.

Creator Certification

At Stellar, certified creators - whether through ARPP in France or the Influencer Marketing Alliance in Belgium - are transparently displayed and filterable. Working with certified creators is increasingly a signal of due diligence in markets where regulation is tightening fast.

Together, these layers answer the questions that matter before you commit: Is this creator genuinely brand-safe? Is their audience real? Are they legally compliant? Do they feel right for our brand?

The Paradox at the Heart of This

Here's the thing I find genuinely fascinating - and slightly uncomfortable - about all of this.

AI creates the risks we're trying to protect against. Deepfakes. AI-generated misinformation at scale. Synthetic content that's indistinguishable from real. Bot networks flooding platforms with fake reviews.

These threats exist because the same technology that builds our defenses can be turned into a weapon.

And yet.

Only AI can protect brands at the scale that modern influencer marketing demands. Manual monitoring can't keep up with the volume, speed, or multilingual complexity of today's creator landscape. Human teams can't review years of content across platforms in minutes. They can't detect subtle visual risks buried in video. They can't continuously monitor campaigns in real time across a dozen creators at once.

The answer to AI risk is not less AI. It's better AI, with humans in control of the decisions it informs.

As I've said before: the machine proposes, but humans dispose. AI accelerates, structures, and flags. You decide. That's not a limitation of the technology - it's the design principle.

Brand safety done right is not about saying "no" faster. It's about saying "yes," with confidence.

What This Means For You Right Now

If you're running influencer campaigns and your brand safety process still relies primarily on manual checks and instinct, you're exposed. Not eventually. Now.

The Meta policy shift has already changed the content environment. The EU AI Act, in force since August 2024, is already tightening transparency obligations for AI-generated content.

Audience fraud hasn't gone away. Creator ecosystems are increasingly complex, with influencers running startups, investing in ventures, and building communities that brands become associated with, whether a campaign is live or not.

The brands that will navigate this confidently are the ones investing now in systematic, AI-powered creator intelligence, not as a compliance exercise, but as a genuine competitive advantage.

Because brand safety, done well, doesn't slow you down. It frees you up to move faster.